NKOAPP is a specialized speech synthesis system that uses glottal and vocal tract parameters to generate realistic speech sounds. The system employs a pipeline architecture that processes parameters through various stages, from target configuration definition to final audio output, using the VocalTractLab API for the actual audio synthesis.

This document gives a technical overview of the system: its architecture, primary components, and operational workflows.

NKOAPP follows a modular architecture with three main processing segments, each handling a distinct part of the speech synthesis pipeline:

1. Configuration & Parameter Definition

Handles the speaker data model (speakerJD2.json), glottal models, vocal tract shapes, and selection of the operational mode (manual or automatic).

2. Parameter Processing & Interpolation

Processes target sequences, applies spline interpolation, integrates f0 and intensity data, and generates tract sequences.

3. Audio Synthesis

Converts tract sequences to audio files using the VocalTractLab synthesis backend.

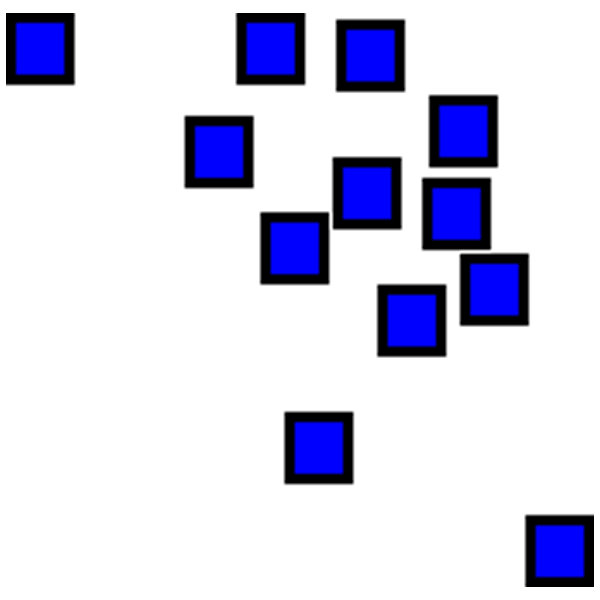

The data processing workflow runs from initial configuration to final audio output, following different paths depending on the selected mode (manual or automatic).

1. NKOAPP.py (Main Orchestrator)

The main script that orchestrates the entire speech synthesis process. It:

- Initializes system parameters and constants

- Loads speaker configurations from JSON

- Manages operational mode selection

- Coordinates all processing modules

- Generates final tract sequence files

Operational Modes:

Manual Mode: Users define target sequences explicitly through glOption and trOption arrays with desired glottal modes and tract configurations, along with durModulation arrays for timing control.

Automatic Mode: The system uses f0 and intensity data from external files to determine target sequences and transitions automatically.
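A minimal sketch of how manual-mode targets could be assembled into a timed sequence. The array names glOption, trOption, and durModulation come from the description above; everything else is an assumption: the string labels, the idea that each durModulation entry scales a base target duration, and the names `Target`, `build_manual_targets`, and `base_duration_s` are hypothetical and not taken from NKOAPP.py.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class Target:
    """One target in the sequence (hypothetical structure, not NKOAPP's own)."""
    start_s: float
    duration_s: float
    glottal_mode: str
    tract_shape: str


def build_manual_targets(
    glOption: Sequence[str],
    trOption: Sequence[str],
    durModulation: Sequence[float],
    base_duration_s: float = 0.1,  # assumed base duration of one target
) -> List[Target]:
    """Zip the three manual-mode arrays into consecutive, non-overlapping targets."""
    if not (len(glOption) == len(trOption) == len(durModulation)):
        raise ValueError("glOption, trOption and durModulation must have equal length")
    targets: List[Target] = []
    t = 0.0
    for gl, tr, mod in zip(glOption, trOption, durModulation):
        dur = base_duration_s * mod  # assumption: durModulation scales the base duration
        targets.append(Target(t, dur, gl, tr))
        t += dur
    return targets


seq = build_manual_targets(["modal", "modal", "breathy"], ["a", "i", "u"], [1.0, 0.5, 2.0])
for target in seq:
    print(target)
```

With a 100 ms base, the three targets start at 0, 0.1, and 0.15 s and last 0.1, 0.05, and 0.2 s.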

2. Glottal Models

The system supports three glottal models, each with its own parameter set:

Geometric Model

10 parameters controlling fundamental geometric properties of vocal fold vibration

Two-Mass Model

7 parameters modeling the vocal folds as a coupled oscillator system

Triangular Model

5 parameters using a simplified triangular glottal pulse shape

The choice of glottal model determines how many glottal parameters must be interpolated and shapes the overall synthesis process. Each model offers a different trade-off between computational complexity and physiological accuracy.
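To make the effect concrete, this small sketch counts how many parameter channels must be interpolated per frame for each model, using the counts above plus the 17 vocal tract parameters listed in the system specifications. The dictionary keys and function names are hypothetical; 400 frames corresponds to one second at 2.5 ms per frame.

```python
from typing import Dict

# Parameter counts as listed above; the keys are illustrative labels.
GLOTTAL_PARAM_COUNTS: Dict[str, int] = {"geometric": 10, "two-mass": 7, "triangular": 5}
VOCAL_TRACT_PARAM_COUNT = 17  # per the system specifications


def channels_per_frame(glottal_model: str) -> int:
    """Number of parameter channels to interpolate for every frame."""
    try:
        return VOCAL_TRACT_PARAM_COUNT + GLOTTAL_PARAM_COUNTS[glottal_model]
    except KeyError:
        raise ValueError(f"unknown glottal model: {glottal_model!r}") from None


def values_to_interpolate(glottal_model: str, n_frames: int) -> int:
    """Total interpolated values for an utterance of n_frames frames."""
    return channels_per_frame(glottal_model) * n_frames


for model in GLOTTAL_PARAM_COUNTS:
    print(model, channels_per_frame(model), values_to_interpolate(model, 400))
```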

3. NKOAPP_JSON_Reader.py

This module handles extracting and parsing data from the speakerJD2.json file, which contains configurations for both vocal tract and glottis parameters.

Key Functions:

4. NKOAPP_f0andIntensityExtractor.py

Provides functions for handling fundamental frequency (f0) and intensity data:

This module is essential for the automatic mode operation, providing the prosodic contour that drives the synthesis process.
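A minimal sketch of the kind of preprocessing automatic mode needs: read an f0 or intensity contour and resample it onto the synthesis frame grid. The file layout (one whitespace-separated `time_s value` pair per line, `#` for comments), the 2.5 ms frame step, the use of linear interpolation, and the function names are all assumptions, not the actual API of NKOAPP_f0andIntensityExtractor.py.

```python
import io
from typing import List, Optional, TextIO, Tuple


def read_contour(stream: TextIO) -> List[Tuple[float, float]]:
    """Parse 'time_s value' lines (assumed format); skip blanks and '#' comments."""
    points: List[Tuple[float, float]] = []
    for raw in stream:
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        t, v = line.split()[:2]
        points.append((float(t), float(v)))
    points.sort()
    if len(points) < 2:
        raise ValueError("a contour needs at least two points")
    return points


def resample(points: List[Tuple[float, float]], frame_s: float = 0.0025,
             n_frames: Optional[int] = None) -> List[float]:
    """Linearly interpolate onto a uniform frame grid; hold end values outside the data."""
    if n_frames is None:
        n_frames = int(round(points[-1][0] / frame_s)) + 1
    out: List[float] = []
    j = 0
    for k in range(n_frames):
        t = k * frame_s
        # Advance to the segment [points[j], points[j + 1]] that contains t.
        while j < len(points) - 2 and points[j + 1][0] <= t:
            j += 1
        (t0, v0), (t1, v1) = points[j], points[j + 1]
        if t <= t0:
            out.append(v0)
        elif t >= t1:
            out.append(v1)
        else:
            out.append(v0 + (v1 - v0) * (t - t0) / (t1 - t0))
    return out


f0 = resample(read_contour(io.StringIO("# time f0\n0.0 100\n0.01 200\n")))
print(f0)
```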

5. NKOAPP_scripts.py

Contains the core interpolation and parameter processing functions:

These functions form the computational core of the system, transforming discrete target configurations into continuous parameter trajectories suitable for audio synthesis.
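The core idea, turning discrete targets into per-frame trajectories, can be sketched with plain linear interpolation across every parameter channel. This is an illustration only: the `(frame_index, parameter_vector)` target format and `expand_targets` are hypothetical, and NKOAPP itself applies spline interpolation rather than straight-line segments.

```python
from typing import List, Sequence, Tuple

Target = Tuple[int, Sequence[float]]  # hypothetical: (frame index, parameter vector)


def expand_targets(targets: Sequence[Target]) -> List[List[float]]:
    """Return one row per frame, linearly interpolating each channel between targets.

    Rows start at the first target's frame and end on the last target's frame.
    """
    if not targets:
        return []
    ordered = sorted(targets, key=lambda tgt: tgt[0])
    width = len(ordered[0][1])
    if any(len(vec) != width for _, vec in ordered):
        raise ValueError("all target vectors must have the same length")
    frames: List[List[float]] = []
    for (f_a, a), (f_b, b) in zip(ordered, ordered[1:]):
        span = f_b - f_a
        for k in range(span):
            s = k / span  # progress from target a (s = 0) towards target b
            frames.append([x + (y - x) * s for x, y in zip(a, b)])
    frames.append([float(v) for v in ordered[-1][1]])
    return frames


traj = expand_targets([(0, [0.0, 10.0]), (4, [4.0, 2.0])])
for row in traj:
    print(row)
```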

The system relies on a structured JSON file (speakerJD2.json) that contains all necessary configurations for the vocal tract and glottis models:

- Anatomical parameters: Physical dimensions of the vocal tract

- Vocal tract shapes: Predefined articulatory configurations for different phones

- Glottal configurations: Parameter sets for different phonation modes

- Default values: Baseline settings for all synthesis parameters

This centralized data model allows for easy modification of speaker characteristics and ensures consistency across the synthesis pipeline.
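A sketch of how a reader might pull one named configuration out of such a file and fill unspecified parameters from the defaults. The JSON layout (top-level `defaults`, `vocal_tract_shapes`, and `glottal_configurations` objects), the parameter names and values, and the function names are hypothetical illustrations, not the actual structure of speakerJD2.json or the API of NKOAPP_JSON_Reader.py.

```python
import json
from typing import Dict, List

# Hypothetical layout with illustrative values; the real speakerJD2.json may differ.
EXAMPLE = """
{
  "defaults": {
    "vocal_tract_shapes": {"HX": 1.0, "HY": -4.75, "JA": -0.2},
    "glottal_configurations": {"f0": 120.0, "pressure": 8000.0}
  },
  "vocal_tract_shapes": {"a": {"JA": -1.5}},
  "glottal_configurations": {"breathy": {"pressure": 6000.0}}
}
"""


def load_speaker(path: str) -> dict:
    """Read and parse a speaker JSON file from disk."""
    with open(path, encoding="utf-8") as fh:
        return json.load(fh)


def shape_vector(speaker: dict, section: str, name: str, order: List[str]) -> List[float]:
    """Return the named configuration's parameters in `order`, using section defaults for gaps."""
    merged: Dict[str, float] = {**speaker["defaults"][section], **speaker[section][name]}
    missing = [p for p in order if p not in merged]
    if missing:
        raise KeyError(f"{section}/{name} lacks parameters: {missing}")
    return [float(merged[p]) for p in order]


speaker = json.loads(EXAMPLE)
print(shape_vector(speaker, "vocal_tract_shapes", "a", ["HX", "HY", "JA"]))
print(shape_vector(speaker, "glottal_configurations", "breathy", ["f0", "pressure"]))
```

Keeping defaults separate from named configurations means a new shape only needs to list the parameters that differ from the baseline.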

The final step in the synthesis process is converting the tract sequence file to audio using the VocalTractLab API:

Current Implementation:

Automated Implementation (Optional):

The VocalTractLab backend uses articulatory synthesis to convert the continuous parameter trajectories into acoustic waveforms, producing high-quality, physiologically grounded speech.

NKOAPP supports multiple interpolation methods to control the smoothness and character of parameter transitions:

Cubic Spline Interpolation

Produces smooth, continuous trajectories with continuous first and second derivatives. Ideal for natural-sounding articulation.
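For reference, a self-contained natural cubic spline (second derivative zero at both ends) shows what continuous first and second derivatives mean in practice. It is a textbook construction in plain Python, not NKOAPP's code, and the function name is hypothetical; in practice a library routine such as SciPy's `CubicSpline` would normally be used instead.

```python
from bisect import bisect_right
from typing import Callable, List, Sequence


def natural_cubic_spline(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """Return a function evaluating the natural cubic spline through (xs, ys); xs must increase."""
    n = len(xs) - 1
    if n < 1:
        raise ValueError("need at least two knots")
    h = [xs[i + 1] - xs[i] for i in range(n)]
    m: List[float] = [0.0] * (n + 1)  # second derivatives; natural ends: m[0] = m[n] = 0
    if n >= 2:
        # Tridiagonal system for interior m[1..n-1], solved with the Thomas algorithm.
        a = [h[i - 1] for i in range(1, n)]                  # sub-diagonal
        b = [2.0 * (h[i - 1] + h[i]) for i in range(1, n)]   # diagonal
        c = [h[i] for i in range(1, n)]                      # super-diagonal
        d = [6.0 * ((ys[i + 1] - ys[i]) / h[i] - (ys[i] - ys[i - 1]) / h[i - 1])
             for i in range(1, n)]
        size = n - 1
        for k in range(1, size):  # forward elimination
            w = a[k] / b[k - 1]
            b[k] -= w * c[k - 1]
            d[k] -= w * d[k - 1]
        sol = [0.0] * size
        sol[-1] = d[-1] / b[-1]
        for k in range(size - 2, -1, -1):  # back substitution
            sol[k] = (d[k] - c[k] * sol[k + 1]) / b[k]
        m[1:n] = sol

    def evaluate(x: float) -> float:
        # Interval [xs[i], xs[i+1]] containing x, clamped to the end intervals.
        i = min(max(bisect_right(xs, x) - 1, 0), n - 1)
        hi = h[i]
        left, right = xs[i + 1] - x, x - xs[i]
        return ((m[i] * left ** 3 + m[i + 1] * right ** 3) / (6.0 * hi)
                + (ys[i] / hi - m[i] * hi / 6.0) * left
                + (ys[i + 1] / hi - m[i + 1] * hi / 6.0) * right)

    return evaluate


step = natural_cubic_spline([0, 1, 2, 3, 4, 5], [0, 0, 0, 1, 1, 1])
print(step(3.5))
```

On step-like targets the curve overshoots: halfway between the knots at 3 and 4 it reaches about 1.10, even though both knots are 1.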

Akima Spline Interpolation

Reduces overshoot and oscillation compared to cubic splines, providing more localized control over parameter trajectories.
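Akima's method sets each knot's tangent from a weighted average of the neighbouring secant slopes, so a sudden change in the targets bends the curve only locally. The plain-Python sketch below implements the textbook algorithm under a hypothetical function name; it is not NKOAPP's code, and SciPy's `Akima1DInterpolator` is the usual library choice.

```python
from bisect import bisect_right
from typing import Callable, List, Sequence


def akima_spline(xs: Sequence[float], ys: Sequence[float]) -> Callable[[float], float]:
    """Return a function evaluating the Akima spline through (xs, ys); xs must increase."""
    n = len(xs)
    if n < 3:
        raise ValueError("need at least three knots")
    # Secant slopes between neighbouring knots.
    m = [(ys[i + 1] - ys[i]) / (xs[i + 1] - xs[i]) for i in range(n - 1)]
    # Akima's end treatment: extrapolate two extra slopes on each side.
    m = [3 * m[0] - 2 * m[1], 2 * m[0] - m[1]] + m + [2 * m[-1] - m[-2], 3 * m[-1] - 2 * m[-2]]
    # Tangent at knot i, weighted by how much the surrounding slopes change.
    t: List[float] = []
    for i in range(n):
        m1, m2, m3, m4 = m[i], m[i + 1], m[i + 2], m[i + 3]
        w1, w2 = abs(m4 - m3), abs(m2 - m1)
        t.append(0.5 * (m2 + m3) if w1 + w2 == 0 else (w1 * m2 + w2 * m3) / (w1 + w2))

    def evaluate(x: float) -> float:
        # Cubic Hermite segment on the interval containing x (clamped to the ends).
        i = min(max(bisect_right(xs, x) - 1, 0), n - 2)
        h = xs[i + 1] - xs[i]
        s = (x - xs[i]) / h
        h00 = 2 * s**3 - 3 * s**2 + 1
        h10 = s**3 - 2 * s**2 + s
        h01 = -2 * s**3 + 3 * s**2
        h11 = s**3 - s**2
        return h00 * ys[i] + h10 * h * t[i] + h01 * ys[i + 1] + h11 * h * t[i + 1]

    return evaluate


step = akima_spline([0, 1, 2, 3, 4, 5], [0, 0, 0, 1, 1, 1])
print(max(step(k / 100) for k in range(501)))
```

On step-like targets the curve stays within [0, 1], whereas a natural cubic spline through the same points overshoots.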

Derivative Order Control

The transition between each source-target pair can be shaped as a cubic, squared, linear, or stepped curve. This choice strongly affects whether the output retains a human quality.
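One way to read this is as a power-law easing between each source and target value. The sketch below is an interpretation, not NKOAPP's code: mapping "cubic", "squared", and "linear" to exponents 3, 2, and 1, holding the source value until the final frame for "stepped", and the `transition` function itself are all assumptions.

```python
from typing import Dict, List

# Hypothetical mapping from curve name to easing exponent.
EXPONENTS: Dict[str, int] = {"linear": 1, "squared": 2, "cubic": 3}
SHAPES = ("stepped", *EXPONENTS)


def transition(src: float, tgt: float, n_frames: int, shape: str = "linear") -> List[float]:
    """Values for the n_frames frames of one transition; the last frame equals tgt."""
    if n_frames < 1:
        raise ValueError("n_frames must be positive")
    if shape not in SHAPES:
        raise ValueError(f"unknown shape: {shape!r}")
    values: List[float] = []
    for k in range(1, n_frames + 1):
        s = k / n_frames  # normalized progress through the transition
        if shape == "stepped":
            w = 1.0 if k == n_frames else 0.0  # hold the source, jump on the last frame
        else:
            w = s ** EXPONENTS[shape]
        values.append(src + (tgt - src) * w)
    return values


for shape in SHAPES:
    print(shape, transition(0.0, 1.0, 4, shape))
```

Higher exponents start more slowly and accelerate into the target; the stepped case has no intermediate values, giving an abrupt jump.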

Sample Rate: 44.1 kHz (configurable)

Temporal Resolution: 2.5 ms per frame (110 samples at 44.1 kHz)

Vocal Tract Parameters: 17 parameters (see Table 1 on main NKOAPP page)

Glottis Parameters: 5-10 parameters (model-dependent)

Supported File Formats:

- Input: JSON (speaker data), TXT (f0/intensity)

- Output: TXT (tract sequences), WAV (audio)
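The sample rate and temporal resolution listed above are related by plain arithmetic: 44,100 Hz × 2.5 ms = 110.25 samples, which an integer frame length truncates to 110 samples (about 2.494 ms). A small sketch with hypothetical helper names:

```python
import math


def samples_per_frame(sample_rate_hz: int = 44100, frame_ms: float = 2.5) -> int:
    """Whole samples per frame (truncated, as an integer frame length must be)."""
    return int(sample_rate_hz * frame_ms / 1000)


def frames_for(duration_s: float, frame_ms: float = 2.5) -> int:
    """Frames needed to cover duration_s (rounded first to tame float noise, then ceiling)."""
    return math.ceil(round(duration_s * 1000 / frame_ms, 9))


spf = samples_per_frame()
print(spf, spf / 44100 * 1000)  # samples per frame and the resulting frame length in ms
print(frames_for(1.0), frames_for(1.0) * spf)  # frames per second and samples they span
```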

1. Configuration Phase

- Edit speakerJD2.json with desired vocal tract shapes and glottal configurations

- Choose operational mode (manual or automatic)

- Select glottal model type

- Set interpolation method and temporal parameters

2. Target Definition Phase

- Manual: Define glOption, trOption, and durModulation arrays

- Automatic: Prepare f0 and intensity data files

3. Processing Phase

- Run NKOAPP.py

- System generates interpolated parameter trajectories

- Tract sequence file is created

4. Synthesis Phase

- Load tract sequence into VocalTractLab

- Synthesize audio output

- Export as WAV file

NKOAPP provides a flexible framework for speech synthesis based on physiological models of the vocal tract and glottis. Its modular design allows for both detailed manual control and automatic generation of speech parameters, making it suitable for research and experimental speech synthesis applications.

The system's strength lies in its ability to produce both highly naturalistic and deliberately unnatural vocalizations, making it particularly valuable for artistic and exploratory applications in voice synthesis and electroacoustic music composition.

Repository: github.com/hogobogobogo/NKOAPP